Res2vec Extracted Word Embeddings

“You shall know a word by the company it keeps.” — John Rupert Firth

“You shall know a word by the company it keeps.” — John Rupert Firth

Word embeddings have always required training. This is such a fundamental assumption in the field that it barely registers as an assumption at all. Word2Vec, GloVe, fastText—all demand iterative optimization. Epochs. Hyperparameter tuning. Gradient descent grinding away at your corpus, pass after pass, slowly converging toward something useful. You feed in a billion tokens, you wait, you hope the learning rate was right, you check the loss curves, you benchmark, you adjust, you run it again.

This is how it’s done. There aren’t alternatives. Until now.

Res2Vec doesn’t train. It computes. (See the results here, paper to come: res2vec-owt1b-188d )

The distinction matters more than it might initially appear. Training implies search—exploring a vast space of possible configurations, guided by gradients, constrained by learning dynamics, subject to the vagaries of initialization and batch ordering. Computing implies derivation—a direct path from input to output, deterministic, reproducible, grounded in mathematical necessity rather than optimization luck.

The Res2Vec process flows through five deterministic stages. Each one transforms its input into something new. No iteration. No convergence criteria. No early stopping. Just transformation.

The first stage is familiar: corpus to vocabulary. Standard tokenization and frequency filtering. You take your corpus—in this case, a billion tokens from OpenWebText—and extract the words that appear frequently enough to be statistically meaningful. Words that show up fewer than ten times get discarded. What remains is a vocabulary of just over a million words. This is preprocessing. Every embedding method does something like this.

The second stage builds the co-occurrence matrix. Words that appear near each other get counted. If “quantum” frequently appears within five words of “mechanics,” that relationship gets recorded. Distance matters—words immediately adjacent contribute more than words five positions away. The result is a massive sparse matrix: a million words by a million words, each cell representing how often two words appear in proximity.

Still familiar territory. This is the foundation that methods like GloVe are built on. The co-occurrence matrix captures something real about language—the distributional hypothesis, the idea that words derive meaning from the company they keep. The matrix is an attempt to formalize that intuition.

The third stage transforms raw counts into meaningful associations. The word “the” co-occurs with everything, not because it’s semantically related to everything, but because it’s ubiquitous. We need to control for base rates—to ask not “how often do these words co-occur?” but “how much more often do they co-occur than chance would predict?” Pointwise Mutual Information answers that question. Positive PMI—PPMI—clips the negative values, keeping only the associations that are stronger than expected.

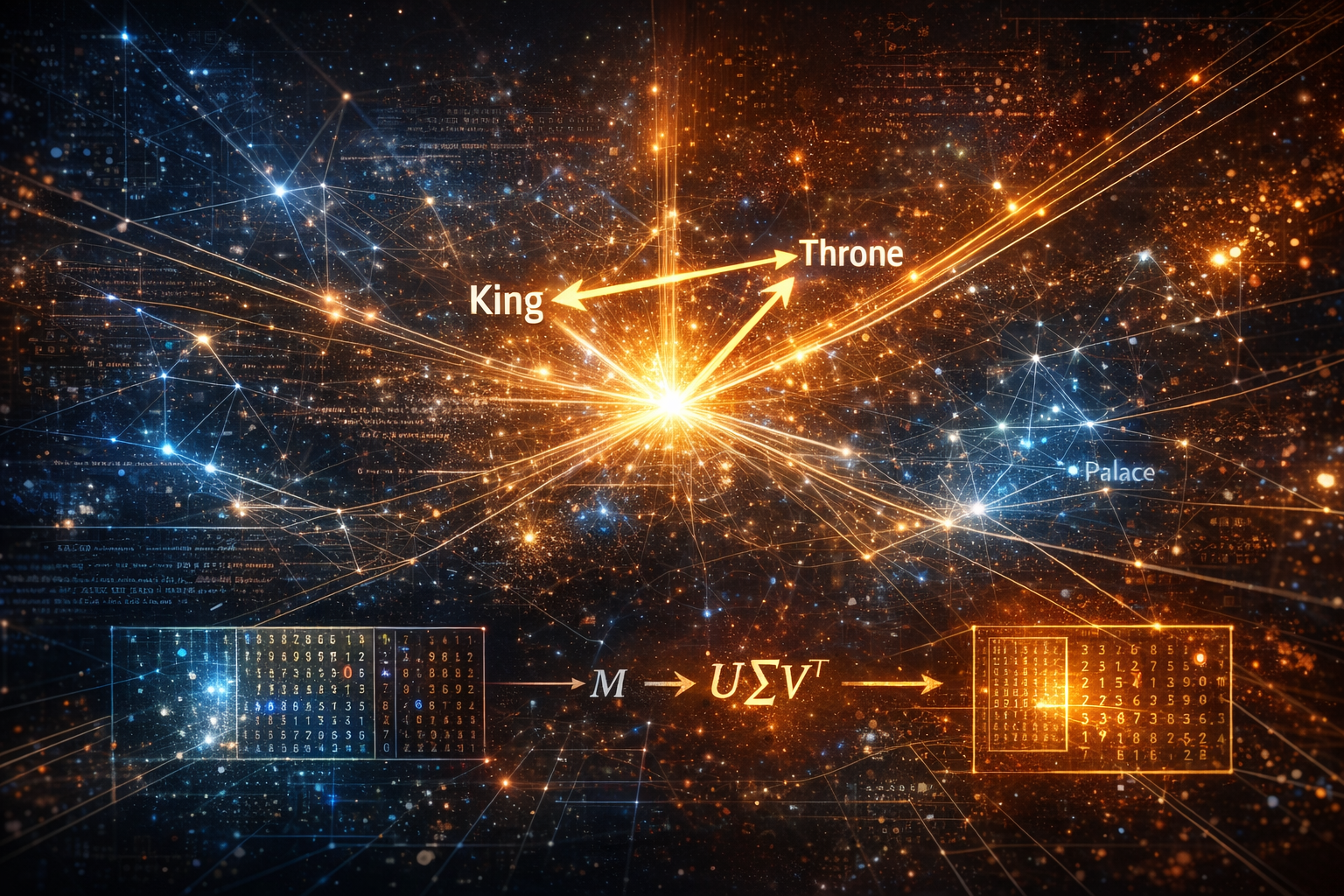

The fourth stage is Singular Value Decomposition. Take the PPMI matrix and factorize it into lower-dimensional components. Instead of a million-dimensional space where each word is defined by its relationship to every other word, compress into a space of a few hundred dimensions that captures the essential structure. Single pass. No iteration. SVD is a closed-form solution—you put in a matrix, you get out its decomposition. The math is well-understood, the algorithms highly optimized. This is linear algebra, not machine learning.

The fifth stage is where it gets interesting. The embeddings acquire a metric tensor—a geometric structure computed from the relationships between vectors in the space. This is where Resonance Theory enters. This is where familiar ground gives way to something else.

Everything up through SVD is established practice. Levy and Goldberg showed in 2014 that Word2Vec’s skip-gram model, for all its neural network machinery, is implicitly factorizing something very close to this PPMI matrix.[1] The insight was significant: the “deep learning” of Word2Vec was, mathematically, doing something that could be expressed in terms of classical matrix operations. You could reconstruct the first four stages from the literature, from standard NLP textbooks. The pipeline up to that point is synthesis, not invention.

But there’s a problem that every approach faces, and it’s a problem that tends to get swept under the rug of hyperparameter tuning.

The pipeline produces embeddings at whatever dimension you specify. You want 100-dimensional embeddings? Set d=100. You want 300? Set d=300. The math doesn’t care. But which dimension should you specify? This is the question that launches a thousand grid searches. Try 50, try 100, try 200, try 300, try 500. Train each one—or in the case of SVD, compute each one. Benchmark against human similarity judgments. Pick the winner. Maybe run it again with finer increments around the best result.

This is expensive. This is arbitrary. This is treating a fundamental parameter of your representation as a hyperparameter to be tuned rather than a property to be understood.

Res2Vec doesn’t search. It derives.

There exists a formula:

V is vocabulary size. I(R) is something called information capacity per dimension. And I(R) itself emerges from something called average resonance —a quantity computed from the geometric relationships between embeddings in the space.

The formula says: given how much information each dimension can carry (which depends on the geometric structure), and given how much information you need to represent (which depends on how many words you have), there is an optimal dimension. Not optimal in the sense of “performs best on benchmarks,” but optimal in the sense of “mathematically appropriate for the structure.”

Here’s where it gets strange.

The formula requires you to know the resonance of your embeddings. But resonance is computed from embeddings. And embeddings are computed at a particular dimension. So the optimal dimension depends on the embeddings, which depend on the dimension.

This seems circular. But circular isn’t the same as meaningless. I argue its recursive.

At dimension 188—for a vocabulary of about a million words, trained on a billion tokens—something unexpected happens. The dimension you’re using equals the dimension the theory predicts you should use. Plug in the resonance computed from 188-dimensional embeddings, and the formula returns approximately 188. 1

A fixed point at 188. The geometry becomes self-consistent. The dimension isn’t imposed from outside—it emerges from the structure itself.

Isn’t this the kind of thing that either means nothing or means everything? Is it just coincidence, a quirk of the mathematics? Or could it be pointing at something deeper about how semantic spaces are structured, about why language has the geometry it does, about the relationship between information and dimension?

I want to be clear about something: this isn’t speculation. This isn’t “here’s an interesting idea that might work someday.”

I already built it. I ran it. The model was released on HuggingFace on January 6th, 2026.

174 minutes for a billion tokens. That’s 59 times faster than Word2Vec on equivalent hardware. Deterministic—same corpus always produces same embeddings, down to the bit. Zero hyperparameters to tune beyond what the theory specifies.

The embeddings work. SimLex-999, WordSim-353—standard benchmarks that measure whether embedding similarity correlates with human judgments of word similarity. Res2Vec captures semantic relationships. Antonyms cluster together, because they share contexts. Hypernyms relate to hyponyms. The geometry of the space reflects the geometry of meaning.

Not quite as well as iteratively-trained methods in this first implementation—about 80% of the benchmark performance. But at 1.7% of the computational cost. And with a dimension that wasn’t searched for but derived.

This was proof of concept. The first time it worked.

The implementation documented here is not optimized. There are obvious improvements I’ve already been exploring—variations on the technique that yield better benchmark scores using the same underlying approach. The gap between Res2Vec and iterative methods isn’t fundamental; it’s a matter of refinement. Or is that 20% gap a failure of SVD, or is it evidence that human benchmarks (like SimLex) reward "over-tuned" spaces rather than "mathematically pure" ones?

What matters at this stage isn’t matching Word2Vec on SimLex-999. What matters is that the approach functions at all. That you can derive embeddings without training. That a theory about information geometry produces a concrete, working artifact.

The question isn’t whether it works. It does. You can download the model right now and compute similarities. The embeddings exist. They function.

The question is what it means. And to answer that, we need to step back far enough to see the landscape we’ve been building on.

Let me be direct.

The modern AI industry is built on training. On iterative optimization. On gradient descent running for days or weeks across thousands of GPUs, slowly converging toward useful representations. This is the foundation everything else rests on—the embeddings that feed into transformers, the attention weights that route information, the massive parameter counts that seem to be the price of admission.

Res2Vec produces comparable embeddings without training. No gradient descent. No epochs. No learning rate schedules. A single pass through the mathematics, and the vectors exist. 3 hours instead of estimated thousands. Not on a GPU cluster—on CPUs.

This is not a marginal improvement. This is not “we made training 20% faster.” This is the same output through a fundamentally different process. Computation instead of optimization. Derivation instead of search.

The industry operates on a premise, and the premise is wrong.

The premise is that these representations must be learned. That there is no closed-form solution. That the only path to useful embeddings runs through massive datasets and massive compute and massive iteration. That scale is the answer because understanding is unavailable.

This premise has driven investment. It has shaped research priorities. It has determined who can participate and who cannot. It has built moats around organizations with access to resources, because resources seemed to be what the problem required. It is damaging the environment. Brute force at its finest.

But what if the problem doesn’t require resources? What if it requires understanding? Wisdom?

What if we’ve been spending millions to train our way to something that could be computed directly? What if, dare I say it, we used our brains instead of brawn?

If embeddings can be derived rather than trained, the compute cost drops by orders of magnitude. Not percentages—orders of magnitude. The resources that have defined who can play in this space become less relevant. A laptop becomes sufficient for what once required a cluster.

And embeddings are just the foundation. The same lens—resonance geometry, fixed points, constraint-bounded structure—points further up the stack. I’ve already been working on transformers. The patterns are there.

The industry has been solving an optimization problem that has a closed-form solution. We’ve been searching for something that can be calculated. We’ve been treating as intractable what is merely not yet understood.

I’ve been sitting on this for months.

Not because it doesn’t work. It works. Not because I’m unsure. I’m not. But because I understand what could happen when something like this lands, and I didn’t know how to release it responsibly.

The AI industry has built its valuations on a premise. That premise is wrong. I’ve known this for a while now. Others have too. However, I am curious how many have for the reasons I assert. I’ve watched the investments, the infrastructure buildouts, the breathless announcements—knowing what I know. The bubble is going to pop any day they say. Perhaps this will be the final pin prick.

I’m not doing this to be destructive. I’m doing it now because waiting longer doesn’t make it easier. It just means more people are exposed when the correction comes.

Pay attention. Prepare accordingly. This is me giving you time.

- A. J. Landgraf, J. Bellay, "word2vec Skip-Gram with Negative Sampling is a Weighted Logistic PCA," arXiv:1705.09755 [cs.CL] (2017).

- "Euler's number," Wikipedia, The Free Encyclopedia; https://en.wikipedia.org/wiki/E_(mathematical_constant)

To be precise, the fixed point occurs at approximately 187.56 dimensions. We round up to 188 to ensure complete information capture—choosing 187 would mean slight data loss, a fractional dimension’s worth of semantic structure left on the table. We cannot, of course, use a partial dimension. What’s interesting is how this connects to a deeper pattern. The problem of optimal partitioning—how to divide a finite resource into discrete units to maximize some quantity—consistently points toward e. Steiner’s problem shows that for a stick of length L broken into n equal parts, the product of lengths is maximized when n = ⌊L/e⌋ or ⌈L/e⌉. The same structure appears in information theory: the quantity x⁻¹ log(x) measures information gleaned from an event with probability 1/x, and this too is maximized at x = e. That our dimension formula produces a value requiring this same ceiling operation—round up to preserve information—suggests Res2Vec may be touching the same underlying optimization structure. The fixed point isn’t just self-consistent; it may be sitting at a natural boundary where information geometry and discrete representation meet.[2]