The Geometry of Semantic Meaning

“It is the glory of geometry that from so few principles, fetched from without, it is able to accomplish so much.” — Sir Isaac Newton

As I think about where AI is and what we are all talking about, there seems to be a gap in the literature. The gap isn’t the geometry of AI systems; that is not a new concept. It is the apparent link between that geometry and quantum mechanics that many might have overlooked. This, I hope, will give some background on where Resonance Theory comes from as I start to share more of the actual math (don’t worry, it’s not too bad). I am just not sure how to introduce something this different. How do you introduce a meta-theory with math? I don’t think this has been done exactly like this before. I am learning as I go.

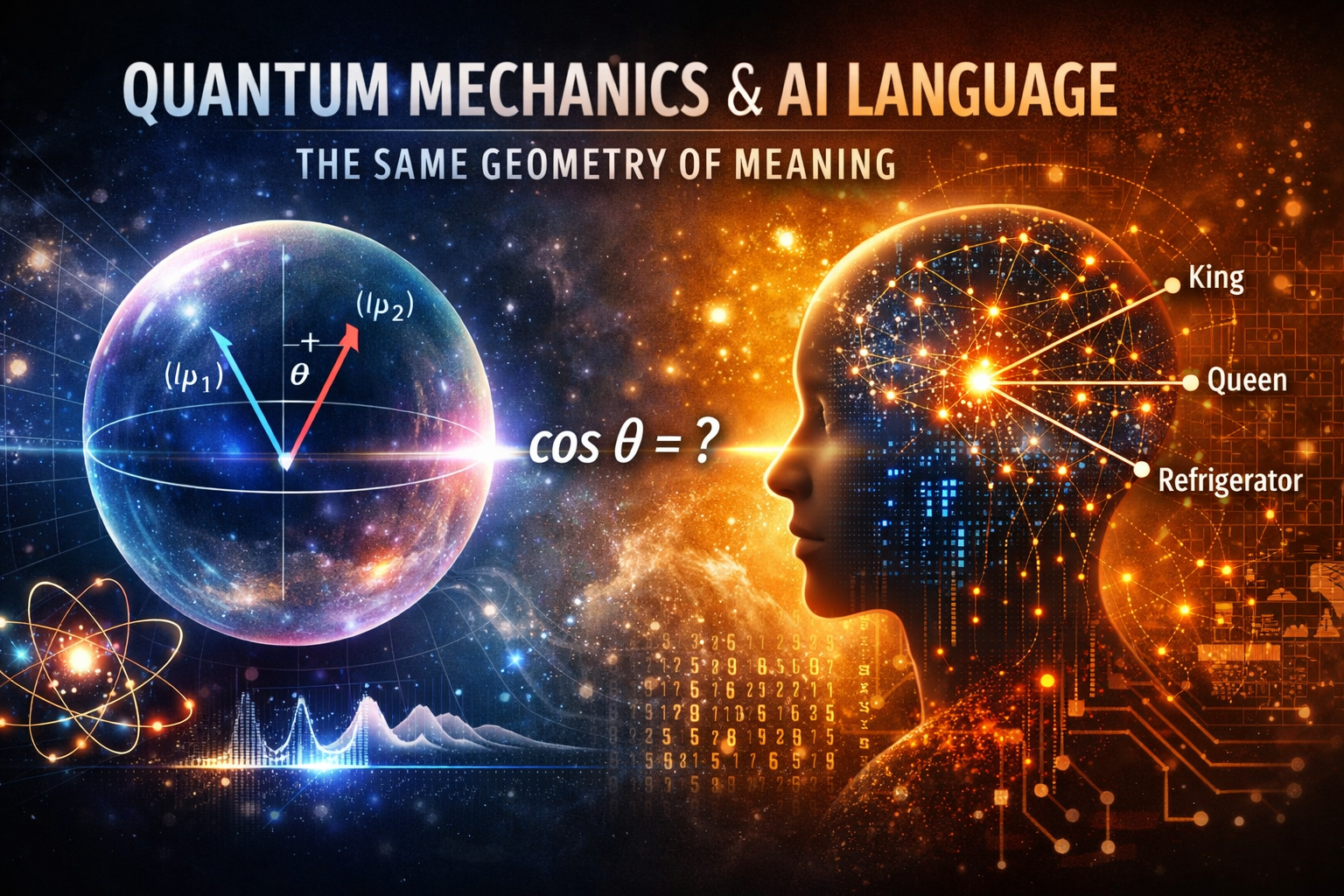

The mathematical parallel between quantum mechanics and human language rests on a surprisingly simple foundation: both systems use the same fundamental operation to determine relationships between their basic elements.

The Core Operation: Comparison Through Angle

In quantum mechanics, the relationship between two states is determined by their inner product: essentially, how aligned they are in a geometric space. When you have two normalized quantum states represented as vectors, the probability of measuring one given the other is the squared magnitude of their inner product, which for real vectors is the cosine of the angle between them, squared. The states themselves live on a sphere (the Bloch sphere for a qubit), and their geometric relationship determines their physical relationship.[1]

Word embeddings discovered the same structure independently. When Word2Vec, GloVe, or any modern embedding method places words in a high-dimensional space, the meaning-relationship between words is captured by the cosine of the angle between their vectors. “King” and “queen” are close (small angle, high cosine); “king” and “refrigerator” are far (large angle, low cosine).[2]

The difference is the square. Quantum mechanics needs probabilities (values that sum to one), so the Born rule squares the magnitude of the inner product. Word embeddings need only similarity (how close, not how likely), so the cosine stands alone. The underlying geometry is the same; the square is just what physics requires to convert alignment into measurement outcomes.
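To make the comparison concrete, here is a minimal sketch in plain Python. The two vectors, the 60-degree angle, and the function names are illustrative assumptions of mine, and the sketch is restricted to real vectors (quantum states are complex in general, where the squared magnitude of the inner product is used).

```python
import math

def dot(u, v):
    """Inner product of two real vectors."""
    return sum(a * b for a, b in zip(u, v))

def cosine_similarity(u, v):
    """Normalized inner product: the cosine of the angle between u and v."""
    return dot(u, v) / math.sqrt(dot(u, u) * dot(v, v))

def born_probability(u, v):
    """Squared normalized overlap between two real state vectors."""
    return cosine_similarity(u, v) ** 2

# Hypothetical example: two unit vectors 60 degrees apart.
theta = math.radians(60)
a = [1.0, 0.0]
b = [math.cos(theta), math.sin(theta)]

similarity = cosine_similarity(a, b)   # embedding-style comparison
probability = born_probability(a, b)   # Born-rule-style comparison

# Measuring b against an orthonormal basis: the squared overlaps sum to one.
basis = [[1.0, 0.0], [0.0, 1.0]]
outcome_probabilities = [born_probability(b, e) for e in basis]

print(f"cosine similarity: {similarity:.3f}")
print(f"squared overlap:   {probability:.3f}")
print(f"sum over basis:    {sum(outcome_probabilities):.3f}")
```

The same normalized inner product feeds both numbers; only the final squaring differs, and the squaring is what makes the outcomes over an orthonormal basis add up to one.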

This is not a loose analogy. The mathematics is identical: a normalized inner product in a vector space.

States, Superposition, and Polysemy

A quantum state can exist in superposition—multiple possibilities held simultaneously until measurement forces a definite outcome. Words operate identically. The word “bank” exists in semantic superposition (financial institution, river edge, billiard shot, aircraft maneuver) until context collapses it to a specific meaning. Context functions as measurement. Anthropic has done some cool work here with toy models.[3]
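As a toy illustration of context acting like measurement, the sketch below treats each sense of “bank” as its own direction and lets a context vector pick the best-aligned sense. Everything here is an assumption made for illustration: the four-dimensional space, the orthonormal sense directions, the equal mix, and the two context vectors. Real embeddings are not laid out this neatly.

```python
import math

def dot(u, v):
    """Inner product of two real vectors."""
    return sum(a * b for a, b in zip(u, v))

def cosine(u, v):
    """Cosine of the angle between u and v."""
    return dot(u, v) / math.sqrt(dot(u, u) * dot(v, v))

# Hypothetical sense directions for "bank" (orthonormal by construction).
senses = {
    "financial institution": [1.0, 0.0, 0.0, 0.0],
    "river edge":            [0.0, 1.0, 0.0, 0.0],
    "billiard shot":         [0.0, 0.0, 1.0, 0.0],
    "aircraft maneuver":     [0.0, 0.0, 0.0, 1.0],
}

# "bank" as an equal mix of its senses, scaled to unit length.
bank = [0.5, 0.5, 0.5, 0.5]

# Born-style weight of each sense before any context is applied.
weights = {name: dot(bank, s) ** 2 for name, s in senses.items()}

def resolve(context):
    """Return the sense whose direction is best aligned with the context."""
    return max(senses, key=lambda name: cosine(context, senses[name]))

# Hypothetical context vectors, e.g. from neighbouring words.
river_context = [0.1, 0.9, 0.0, 0.2]
money_context = [0.8, 0.1, 0.1, 0.0]

print(weights)
print(resolve(river_context))
print(resolve(money_context))
```

Before context, every sense carries the same weight and the weights sum to one; once a context vector is supplied, the comparison by angle singles out one sense.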

Both systems require a Hilbert space—a complete vector space with an inner product—to function coherently. Quantum states live in such spaces; so do concepts, when formalized properly. My work with Res2Vec demonstrates this concretely: word embeddings trained on a billion tokens achieve a natural “fixed point” at 188 dimensions where the geometry becomes maximally clean, and the similarity function reduces to pure cosine—identical to the quantum mechanical inner product.[4]

Static relationships are only part of the picture. Meaning doesn’t just sit there—it combines. And when you look at how meaning combines across different fields, the same geometric structure appears.

Vector arithmetic provides the cleanest demonstration:
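The standard illustration from the word2vec paper [2] is the analogy vector("king") - vector("man") + vector("woman"), which lands closest to vector("queen"). Below is a minimal sketch of that arithmetic. The three-dimensional vectors are made up by hand for illustration (roughly: royalty, gender, appliance-ness) and do not come from any trained model; leaving the query words out of the candidate set is a choice made in this sketch.

```python
import math

def dot(u, v):
    """Inner product of two real vectors."""
    return sum(a * b for a, b in zip(u, v))

def cosine(u, v):
    """Cosine of the angle between u and v."""
    return dot(u, v) / math.sqrt(dot(u, u) * dot(v, v))

# Hypothetical hand-made embeddings, NOT taken from a trained model.
embeddings = {
    "king":         [0.9,  0.8, 0.1],
    "queen":        [0.9, -0.7, 0.1],
    "man":          [0.1,  0.9, 0.0],
    "woman":        [0.1, -0.8, 0.0],
    "refrigerator": [0.0,  0.1, 1.0],
}

def analogy(a, b, c):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding a, b, c."""
    target = [x - y + z for x, y, z in
              zip(embeddings[a], embeddings[b], embeddings[c])]
    candidates = [w for w in embeddings if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(target, embeddings[w]))

nearest = analogy("king", "man", "woman")
print(nearest)
```

The result is found by adding and subtracting vectors and then comparing angles; no stored fact about royalty is looked up.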

This is not retrieval. This is composition—taking semantic elements and combining them to produce new meaning through literal vector addition. The relationships between concepts are preserved under transformation because they are geometric relationships.[2]

Cognitive linguistics arrived at the same structure through different means. Fauconnier and Turner’s work on conceptual blending showed that when humans combine meanings—metaphor, analogy, novel concepts—the process follows geometric rules: projection between mental spaces, selective inheritance of structure, emergent meaning at the intersection.[5]

They weren’t doing linear algebra. They were studying how people think. They found geometry anyway.

Chomsky’s minimalist program narrowed the core of human language to a single operation: MERGE. Take two elements, combine them into a new structure, repeat recursively.[6]

Stripped of the biological framing, MERGE is a geometric operation—it creates hierarchical structure through combination, exactly what vector composition does in semantic space.

Quantum mechanics composes systems through tensor products—another geometric operation that combines state spaces while preserving their internal structure. When two quantum systems interact, their joint state lives in the product of their individual spaces, and the geometry of that product determines what measurements are possible.
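One way to see what “preserving internal structure” means is that the inner product of two product states factors into the inner products of their parts. The sketch below checks this numerically, assuming real two-dimensional state vectors and arbitrary example angles; the helper names are mine.

```python
import math

def dot(u, v):
    """Inner product of two real vectors."""
    return sum(x * y for x, y in zip(u, v))

def tensor(u, v):
    """Tensor (Kronecker) product of two vectors, flattened to a list."""
    return [x * y for x in u for y in v]

def unit(angle_degrees):
    """Unit vector in the plane at the given angle."""
    t = math.radians(angle_degrees)
    return [math.cos(t), math.sin(t)]

# Hypothetical single-system states (real 2D vectors for simplicity).
a, b = unit(0), unit(90)
c, d = unit(30), unit(45)

# Joint states live in the 2 x 2 = 4 dimensional product space.
joint_ab = tensor(a, b)
joint_cd = tensor(c, d)

joint_overlap = dot(joint_ab, joint_cd)
product_of_overlaps = dot(a, c) * dot(b, d)

print(len(joint_ab))
print(abs(joint_overlap - product_of_overlaps) < 1e-12)
```

The angles inside each subsystem carry over to the joint space: the joint overlap is just the product of the individual overlaps.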

Four fields. Four different starting points. Same conclusion: composition is geometric. Meaning combines the way vectors combine—not metaphorically, but mathematically.

Why does this happen? The deeper answer appears to be that both physics and language are systems for comparing states under constraints. Quantum mechanics compares possible configurations of physical systems; language compares possible meanings under contextual constraints. The mathematical structure that emerges when you require consistent, reversible, well-behaved comparison is the same in both cases: there are only a few ways to do it coherently, and nature and language both found the same one.

Human understanding is, operationally, measuring angles between concepts.

1. IBM Quantum, "Density Matrices: Bloch Sphere," General Formulation of Quantum Information (IBM Quantum Learning)

2. T. Mikolov, K. Chen, G. Corrado, J. Dean, "Efficient estimation of word representations in vector space," arXiv:1301.3781 [cs.CL] (2013)

3. N. Elhage et al., "Toy Models of Superposition," arXiv:2209.10652 [cs.LG] (2022)

4. D. Grey, "res2vec-owt1b-188d," Hugging Face (2025)

5. G. Fauconnier, M. Turner, The Way We Think: Conceptual Blending and the Mind's Hidden Complexities (Basic Books, New York, 2002)

6. M. D. Hauser, N. Chomsky, W. T. Fitch, "The faculty of language: What is it, who has it, and how did it evolve?" Science 298, 1569–1579 (2002)